- I permanently deactivated my Twitter account in January 2023. Links to the account below aren’t functional anymore.

So I’ve decided to give this new-fangled web service called “Twitter” a go, the one the kids have been so frantic about for the past couple of years.[1. You can follow me, if you wish, at @chrpistorius] To be honest, Twitter has completely passed me by so far, as I didn’t really see the sense in it when you can just as easily share links and leave short notes about ideas that have just popped into your head on Facebook.

However, this article is not supposed to be about my experiences with Twitter, but rather about something that I’ve found on Twitter thanks to @janMato: a little Python program called Wuggy, developed by folks at the Center for Reading Research of the Department of Experimental Psychology at Ghent University, Belgium. This program allows users to create pseudo-words tailored to the specific sound and syllable structure of a language, e.g. for use in cognitive research. The classic example is English ‘wug’, which test subjects are asked to pluralize. JanMato suggested it would be useful for conlanging, too.

In my last post, I had already mentioned that I sometimes use a list of generated words to choose from when I can’t think of a suitable word for some meaning off the top of my head. The list I use currently was generated with a program called kwet, based on the data gained from a rather tedious, search-and-replace heavy analysis of my language’s dictionary that I conducted last year. I’ve been meaning to program a PHP script that can do this analysis automatically (and never did), but I thought that if this program can actually analyze a dictionary you give it in order to generate similar non-words, I might give it a go anyway.

So, how do we get our own words into the program? Wuggy is built in Python, as I said, so its code should be rather straightforward (unlike Perl code, which is simply not to be read). What you need is a bunch of files:

- ./plugins/subsyllabic_yourlang.py

- ./plugins/orth/yl.py

- ./data/yourlang_dictionary.txt

Fortunately, you can simply copy an existing subsyllabic_*.py and orth file and change the name of the language in the files to something more appropriate. You must also register the module for your language in ./plugins/__init__.py by simple analogy with the other entries. The dictionary files Wuggy uses are just flat TXT files where columns are divided with a tab:

word ↦ word’s syllabification ↦ some numeric value I don’t know how to obtain but just write 1

I got this file by simply dumping the ‘pronunciation’ field of my MySQL database into a CSV file which I edited to use orthography instead of phonemic transcription (not very difficult in Ayeri) etc. to fit Wuggy’s format. In order to get Wuggy to do something, you will also need to provide some input to generate words from – I just use my dictionary file with the last field (the one with ‘1’ at the end of each line) removed.
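The conversion step could be scripted roughly like this. This is only a sketch under my own assumptions: the function name `build_wuggy_files` is made up, and it expects a hypothetical two-column CSV dump (word, syllabified word) that has already been converted to orthography. It writes the three-column dictionary file with a constant ‘1’ in the last field, plus the two-column input file, and drops repeated words along the way.

```python
import csv


def build_wuggy_files(csv_path, dict_path, input_path, frequency="1"):
    """Write a tab-separated dictionary file and a matching input file.

    Assumed CSV layout (hypothetical): column 1 = word, column 2 = the
    same word split into syllables with hyphens, e.g. "makim,ma-kim".
    """
    seen = set()
    rows = []
    with open(csv_path, newline="", encoding="utf-8") as handle:
        for record in csv.reader(handle):
            if len(record) < 2:
                continue  # skip blank or malformed lines
            word, syllables = record[0].strip(), record[1].strip()
            if not word or word in seen:
                continue  # keep unique entries only
            seen.add(word)
            rows.append((word, syllables))

    # Dictionary file: word, syllabification, constant placeholder value.
    with open(dict_path, "w", encoding="utf-8", newline="\n") as handle:
        for word, syllables in rows:
            handle.write(f"{word}\t{syllables}\t{frequency}\n")

    # Input file: the same lines with the last field left out.
    with open(input_path, "w", encoding="utf-8", newline="\n") as handle:
        for word, syllables in rows:
            handle.write(f"{word}\t{syllables}\n")

    return len(rows)
```

Calling `build_wuggy_files("dump.csv", "yourlang_dictionary.txt", "input.txt")` would then produce both files in one go; the file names here are placeholders.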

If all prerequisites are installed and you execute Wuggy.py, there’ll be a dialog window with some options. If the creation of Yourlang’s module has worked out, you should see “Subsyllabic Yourlang” as an option in the “Language Modules” drop-down menu and if you choose Yourlang, a progress bar thing should pop up shortly, which tells you about loading and generating some values. I ran my whole dictionary shortened to unique entries (~1800) through the program this afternoon, creating 3 alternatives per word. Here’s some of the output (left original, right generated):

a-ra kri-bay

ba-br= de-bi

ban-te-b= nil-pu-r=

da-lang kra-nu=

en-van tay-tran

This doesn’t look bad so far, but annoyingly, the program recreated the same words dozens of times for the same or similar original syllables, even with the dissimilarity from the original word set to 1 out of 3. Also, this occurred frequently:

bu-rang lu-u=

e-rar me-ik

i-lon le-in

kay-ra lē-o

ma-kim ka-os

The problem here is that Ayeri allows words to begin with vowels, but the likelihood of two vowels in a row mid-word is rather small. Also, words can’t end in, e.g., [p t k], and long vowels are the result of an allophonic process triggered by morphotactics rather than lexicalized. Unfortunately, I don’t know how to tweak my module files to avoid this.
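Short of fixing the module itself, one workaround would be to clean up the generated list afterwards. The following is a rough sketch, not anything Wuggy provides: the function names are invented, and the rules are a deliberately strict reading of the constraints above (no vowel on both sides of a syllable break, no long vowels, no final p, t or k). It also drops repeated candidates, which takes care of the duplicates.

```python
import re
import unicodedata

VOWELS = "aeiouāēīōū"
HIATUS = re.compile(f"[{VOWELS}]-[{VOWELS}]")  # vowel, syllable break, vowel
LONG_VOWEL = re.compile("[āēīōū]")             # macron vowels
FINAL_STOP = re.compile("[ptk]$")              # word ends in p, t or k


def is_plausible(candidate):
    """Return True if a hyphen-syllabified candidate breaks none of the rules."""
    return not (
        HIATUS.search(candidate)
        or LONG_VOWEL.search(candidate)
        or FINAL_STOP.search(candidate)
    )


def filter_candidates(candidates):
    """Drop duplicates and implausible candidates, keeping the original order."""
    seen = set()
    kept = []
    for candidate in candidates:
        # Normalize so that "e" + combining macron matches the precomposed "ē".
        candidate = unicodedata.normalize("NFC", candidate.strip())
        if candidate and candidate not in seen and is_plausible(candidate):
            seen.add(candidate)
            kept.append(candidate)
    return kept
```

Since vowel sequences are merely unlikely rather than impossible, the hiatus rule throws away a few legitimate candidates as well; with a list to choose from, that seems an acceptable price.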

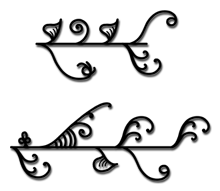

Just in case this was missed by anyone … If you’ve been following my work you may remember I used to have this Vine Script thing, which was an ornamental alphabet that took inspiration for its characters from climbing plants.
